DGM lib

Machine Learning with Conditional Random Fields

Introduction

DGM is a cross-platform C++ library that implements a range of tasks for probabilistic graphical models with pairwise or complete (dense) dependencies. It is intended for use with Markov and Conditional Random Fields (MRF / CRF), Markov Chains, Bayesian and Neural Networks, and related models. Specifically, it includes a variety of methods for the following tasks:

- Learning: Training of unary and pairwise potentials

- Inference / Decoding: Computing the conditional probabilities and the most likely configuration

- Parameter Estimation: Computing maximum likelihood (or MAP) estimates of the parameters

- Evaluation / Visualization: Evaluation and visualization of the classification results

- Data Analysis: Extraction, analysis and visualization of valuable knowledge from training data

- Feature Extraction: Extraction of various descriptors from images, which are useful for classification
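To make the inference / decoding task more concrete, here is a minimal sketch in plain C++ that does not use the DGM API. It assumes a small binary-labeled chain with hand-picked unary and pairwise potentials, and finds the most likely configuration together with its probability by exhaustive enumeration. The names `ChainResult` and `decodeChain` are hypothetical and exist only for this illustration; practical inference methods avoid enumerating every configuration.

```cpp
#include <array>
#include <cassert>
#include <cstddef>
#include <vector>

// Hypothetical result type for this sketch (not part of DGM).
struct ChainResult {
    std::vector<int> labels;   // most likely configuration (one label per node)
    double probability;        // normalized probability of that configuration
};

// Brute-force decoding on a chain of binary nodes.
// unary[i][s]    : potential of node i taking state s
// pairwise[s][t] : potential of neighbouring nodes taking states s and t
ChainResult decodeChain(const std::vector<std::array<double, 2>>& unary,
                        const std::array<std::array<double, 2>, 2>& pairwise)
{
    const std::size_t n = unary.size();
    assert(n < 20);  // enumeration is exponential in n, so keep the chain tiny

    ChainResult best{std::vector<int>(n, 0), 0.0};
    double Z = 0.0;            // partition function: sum of all unnormalized scores
    double bestScore = -1.0;

    for (std::size_t code = 0; code < (std::size_t(1) << n); ++code) {
        std::vector<int> y(n);
        double score = 1.0;
        for (std::size_t i = 0; i < n; ++i) {
            y[i] = static_cast<int>((code >> i) & 1);            // state of node i
            score *= unary[i][y[i]];                             // unary term
            if (i > 0) score *= pairwise[y[i - 1]][y[i]];        // pairwise term
        }
        Z += score;
        if (score > bestScore) {
            bestScore = score;
            best.labels = y;
        }
    }
    best.probability = bestScore / Z;
    return best;
}
```

With unary potentials `{0.9, 0.1}`, `{0.6, 0.4}`, `{0.2, 0.8}` and a pairwise potential of 2 for equal neighbouring states and 1 otherwise, the sketch returns the labeling `0, 0, 1`.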

DGM is released under a BSD license and is therefore free for both academic and commercial use.

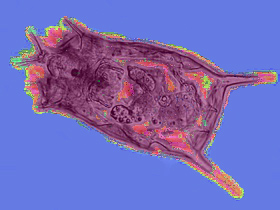

Application Examples

If you have applied the DGM library in your work and achieved interesting results, please send them to me at sergey.kosov@project-10.de so that I can feature them on this web page. This will help us to promote and improve the library. Thank you in advance for your support.

Downloads

Note: By installing, copying, or otherwise using this software, you agree to be bound by the terms of its license. Read the license.

DGM in Publication

To reference DGM in a publication, please include the library name and a link to this website [BibTeX]. You may also want to include the library version, since the software is updated regularly.

Citations

If you use this software in a publication, please cite the work using the following information:

Sergey Kosov. Direct graphical models C++ library. https://research.project-10.de/dgm/, 2013.

or using the BibTeX file.
