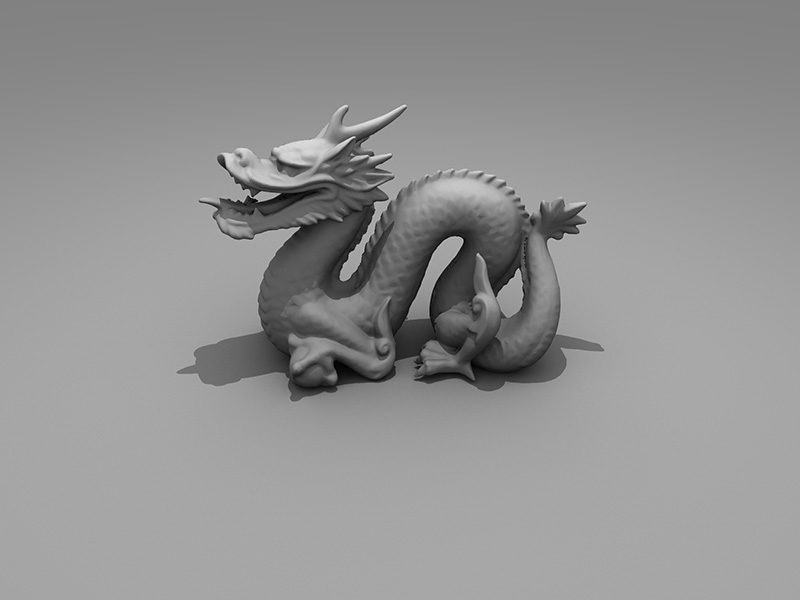

Open-Source Ray-Tracing Library

Introduction

OpenRT is a C++ ray-tracing library for synthesising photo-realistic images. The library is developed primarily for academic purposes: as an accompaniment to the course on computer graphics and as a teaching aid for university students. Specifically, it includes the following features:

- Distribution Ray Tracing

- Global Illumination

OpenRT aims for a realistic simulation of light transport, in contrast to other rendering methods such as rasterisation, which focus more on the realistic simulation of geometry. Effects such as reflections and shadows, which are difficult to simulate with other algorithms, are a natural result of the ray-tracing algorithm. Because each ray is computed independently of all others, the library is amenable to a basic level of parallelisation. OpenRT is released under a BSD license and is therefore free for both academic and commercial use. The code is written entirely in C++ and uses the OpenCV library.
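To illustrate why shadows fall out of ray tracing naturally and why rays are independent of one another, here is a minimal, self-contained sketch. It does not use the OpenRT API or OpenCV: every name in it (`Vec3`, `Ray`, `Sphere`, `intersect`, `shade`, `render`) is hypothetical, and the scene model (spheres only, one point light, a pinhole camera at the origin looking along +z, Lambertian shading) is an assumption made for brevity.

```cpp
#include <algorithm>
#include <cassert>
#include <cmath>
#include <cstddef>
#include <limits>
#include <vector>

// Hypothetical types for illustration only; none of these names come from OpenRT.
struct Vec3 { double x, y, z; };
inline Vec3 operator+(Vec3 a, Vec3 b) { return {a.x + b.x, a.y + b.y, a.z + b.z}; }
inline Vec3 operator-(Vec3 a, Vec3 b) { return {a.x - b.x, a.y - b.y, a.z - b.z}; }
inline Vec3 operator*(Vec3 a, double s) { return {a.x * s, a.y * s, a.z * s}; }
inline double dot(Vec3 a, Vec3 b) { return a.x * b.x + a.y * b.y + a.z * b.z; }
inline Vec3 normalize(Vec3 v) { return v * (1.0 / std::sqrt(dot(v, v))); }

struct Ray { Vec3 org, dir; };                 // dir is assumed to be unit length
struct Sphere { Vec3 center; double radius; };

const double kInf = std::numeric_limits<double>::infinity();

// Distance to the nearest hit in front of the ray origin, or infinity on a miss.
double intersect(const Ray& ray, const Sphere& s) {
    const double eps = 1e-6;                   // ignore hits at or behind the origin
    Vec3 oc = ray.org - s.center;
    double b = dot(oc, ray.dir);
    double c = dot(oc, oc) - s.radius * s.radius;
    double disc = b * b - c;                   // quarter discriminant of the quadratic
    if (disc < 0.0) return kInf;
    double root = std::sqrt(disc);
    double t = -b - root;                      // nearer root first
    if (t < eps) t = -b + root;                // origin inside the sphere
    return t < eps ? kInf : t;
}

// Grey value in [0, 1] for one ray: Lambertian term with a hard shadow.
double shade(const Ray& ray, const std::vector<Sphere>& scene, Vec3 light) {
    double tNear = kInf;
    const Sphere* hit = nullptr;
    for (const Sphere& s : scene) {
        double t = intersect(ray, s);
        if (t < tNear) { tNear = t; hit = &s; }
    }
    if (!hit) return 0.0;                      // background

    Vec3 p = ray.org + ray.dir * tNear;        // hit point
    Vec3 n = normalize(p - hit->center);       // surface normal
    Vec3 toLight = light - p;
    double dist = std::sqrt(dot(toLight, toLight));
    Vec3 l = toLight * (1.0 / dist);

    // The shadow is just one more ray: from the hit point towards the light.
    Ray shadow{p + n * 1e-4, l};               // small offset avoids self-intersection
    for (const Sphere& s : scene)
        if (intersect(shadow, s) < dist) return 0.0;

    return std::max(0.0, dot(n, l));
}

// Row-major grey image. Each pixel reads only the shared, immutable scene,
// so the loop iterations are independent and could be distributed over threads.
std::vector<double> render(int width, int height,
                           const std::vector<Sphere>& scene, Vec3 light) {
    assert(width > 0 && height > 0);
    std::vector<double> image(static_cast<std::size_t>(width) * height);
    for (int y = 0; y < height; ++y) {
        for (int x = 0; x < width; ++x) {
            double u = (x + 0.5) / width * 2.0 - 1.0;    // image plane at z = 1
            double v = 1.0 - (y + 0.5) / height * 2.0;
            Ray primary{Vec3{0, 0, 0}, normalize(Vec3{u, v, 1.0})};
            image[static_cast<std::size_t>(y) * width + x] = shade(primary, scene, light);
        }
    }
    return image;
}
```

Calling `render(3, 3, scene, light)` with a single unit sphere at `(0, 0, 3)` returns nine grey values, with the centre pixel lit and the corner pixels left as background. Because no pixel writes to data that another pixel reads, the outer loop is a natural candidate for a parallel loop construct; whether and how OpenRT parallelises its own renderer is not shown here.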

Features

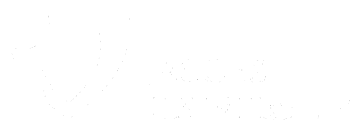

Anti-aliasing

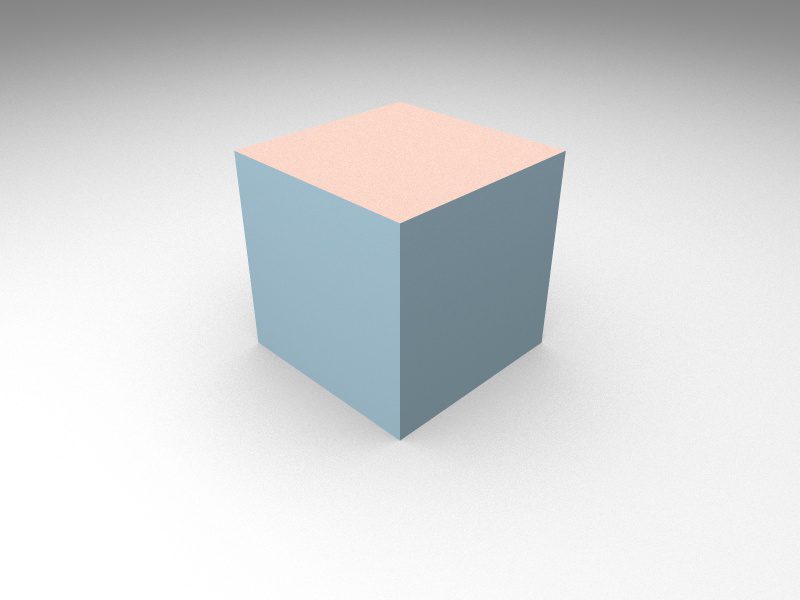

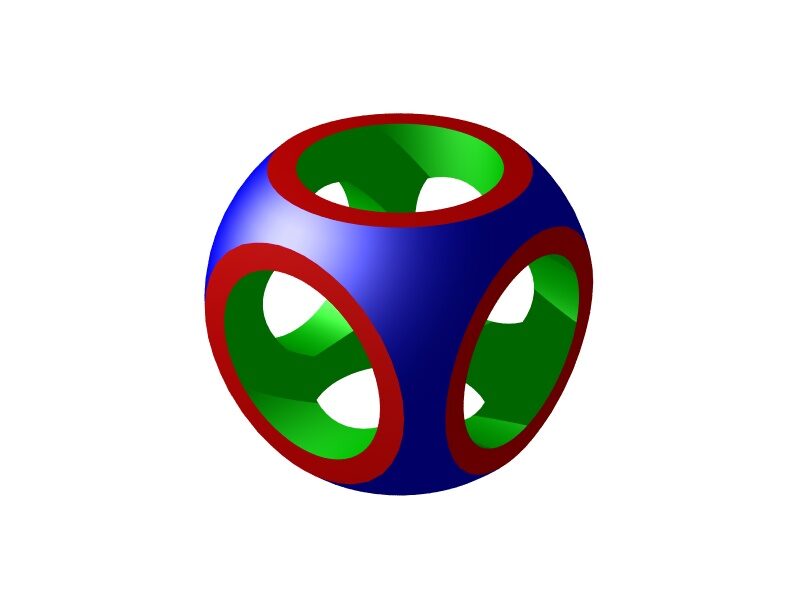

Constructive solid geometry (by Otmane Sabir)

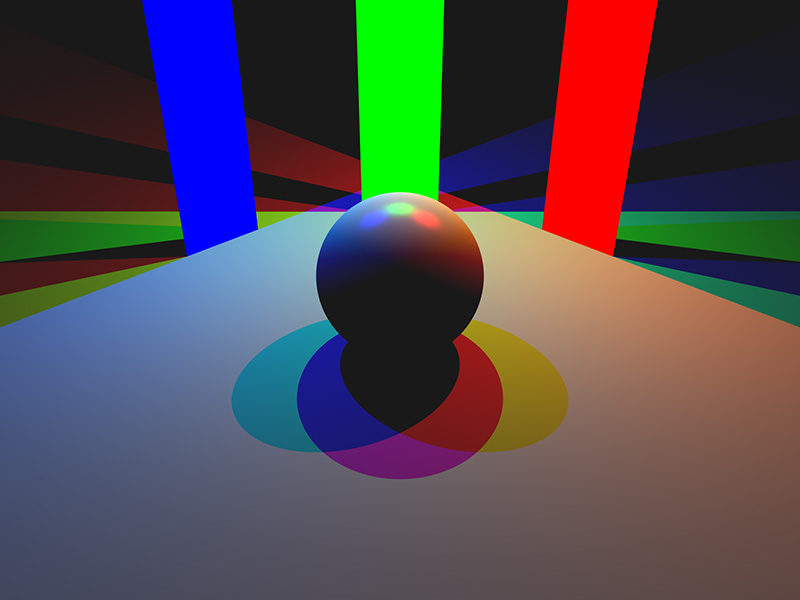

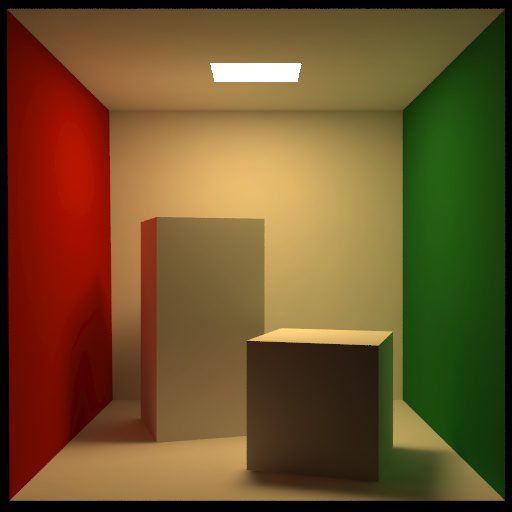

Area Lights

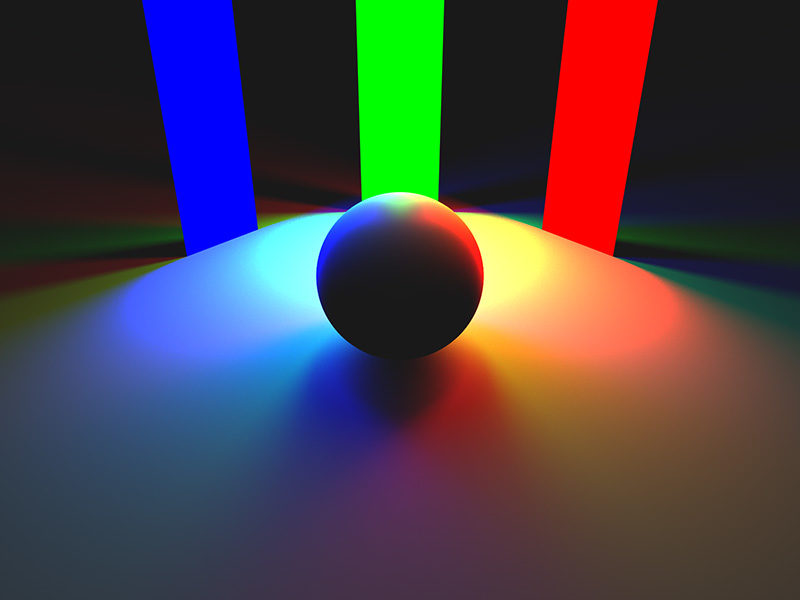

Ambient Occlusion

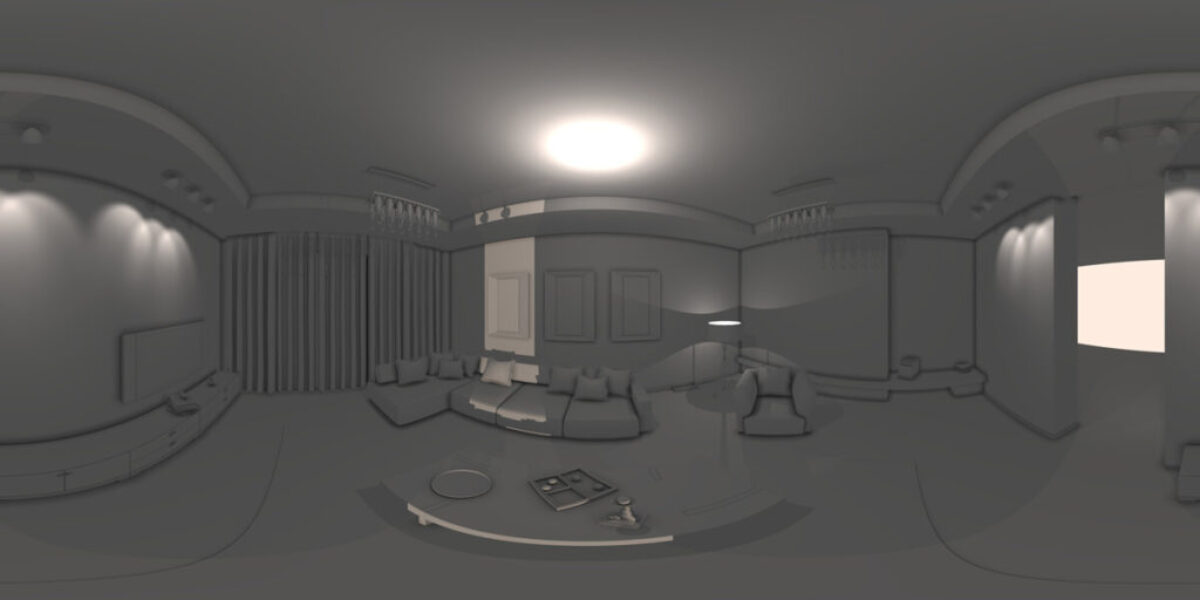

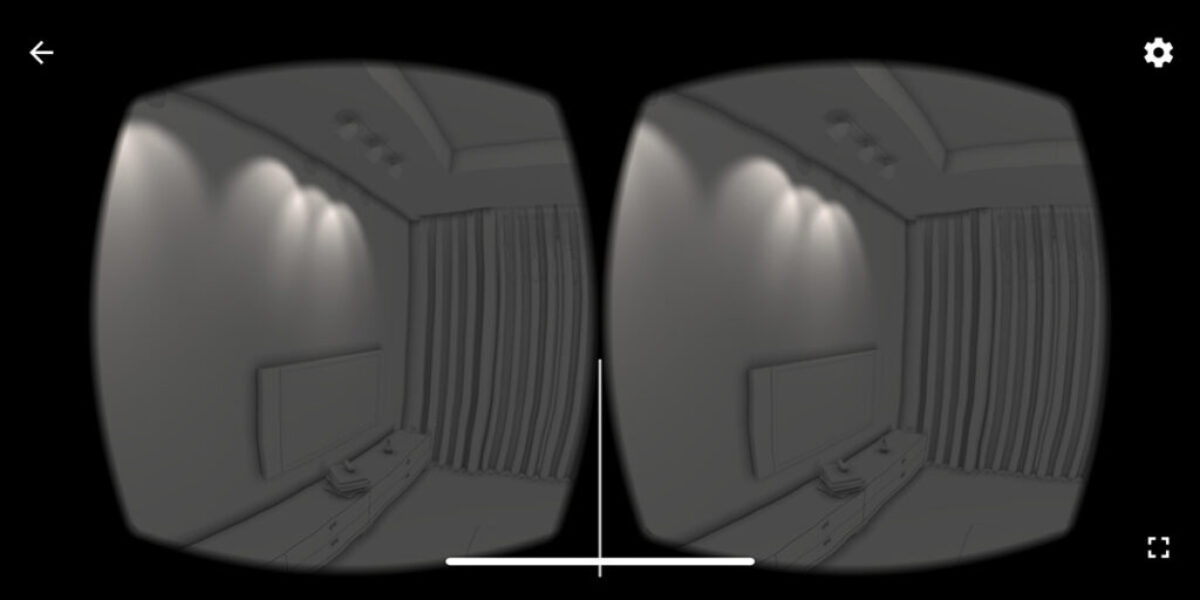

VR 360° Camera (by Fjolla Dedaj)

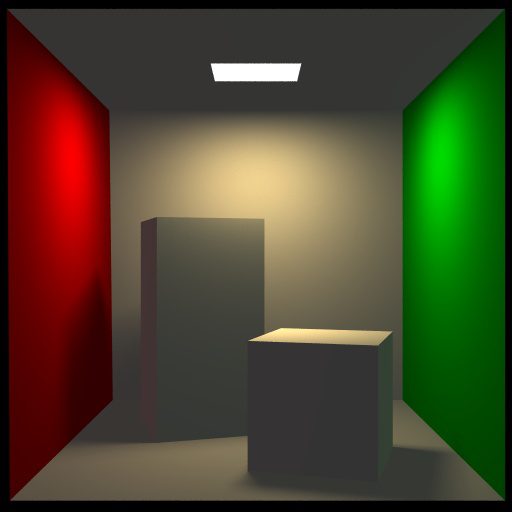

Cornell Box

Computer Graphics Course

| Lectures | Slides | Worksheets | Assignments | |

|---|---|---|---|---|

| 1 | Introduction to Ray Tracing | slides | ||

| 2 | Camera and Lens Models | slides | Worksheet 1 | |

| 3 | Ray-geometry intersection algorithms | slides | Worksheet 2 | Assignment 1 |

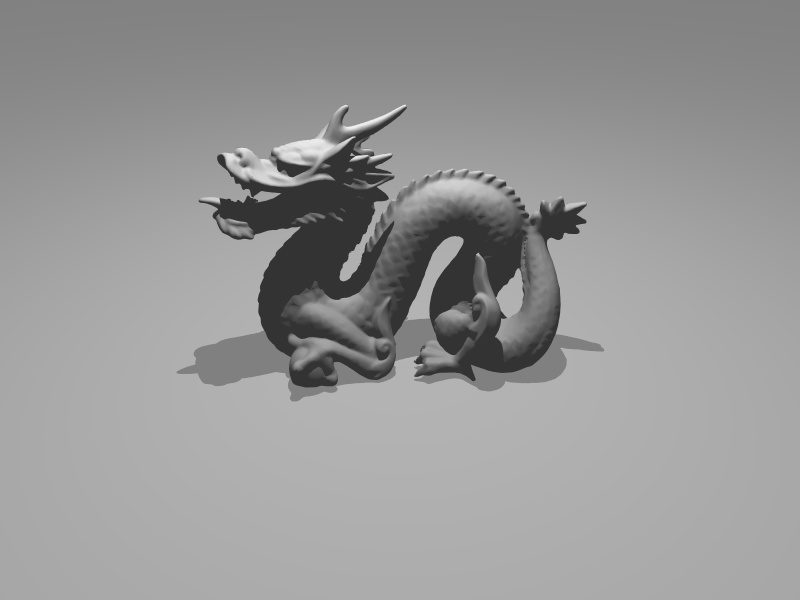

| 4 | Spatial Index Structures | slides | Assignment 2 | |

| 5 | Shading: Rendering Equation & BRDF | slides | Worksheet 3 | Assignment 3 |

| 6 | Texturing | slides | Worksheet 4 | Assignment 4 |

| 7 | Distribution Ray-Tracing | slides | Assignment 5 | |

| 8 | Transformations | |||

| 9 | Animation | Worksheet 5 | Assignment 6 | |

| 10 | Global Illumination | |||

| 11 | Human Visual System | |||

| 12 | Color | |||

| 13 | Sampling and Reconstruction | |||

| 14 | Environment camera & Virtual Reality | Worksheet 6 |

Project and Thesis Topics